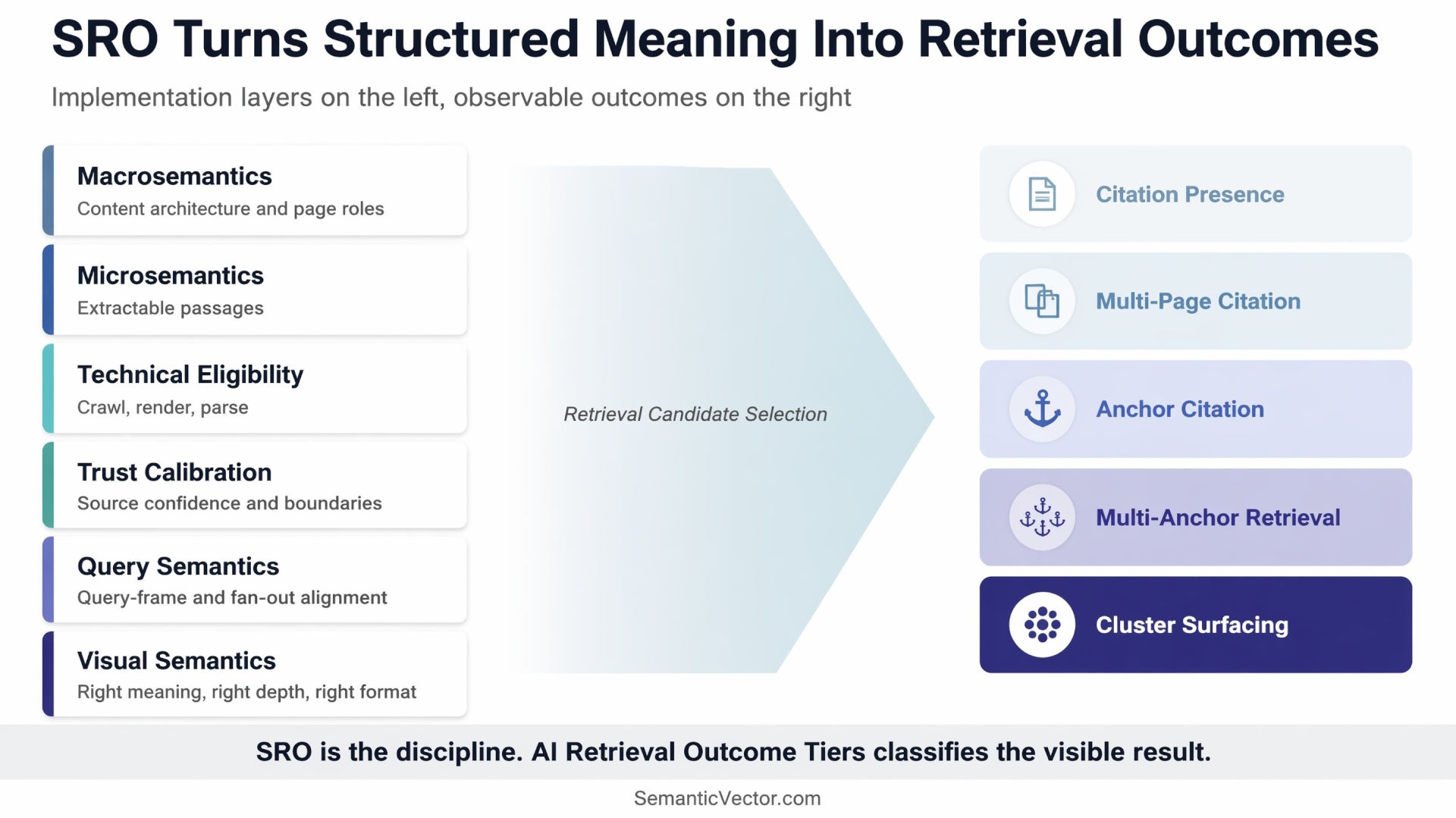

A performance framework for measuring Semantic Retrieval Optimization outcomes, from basic citation to cluster-level surfacing inside AI-generated search answers.

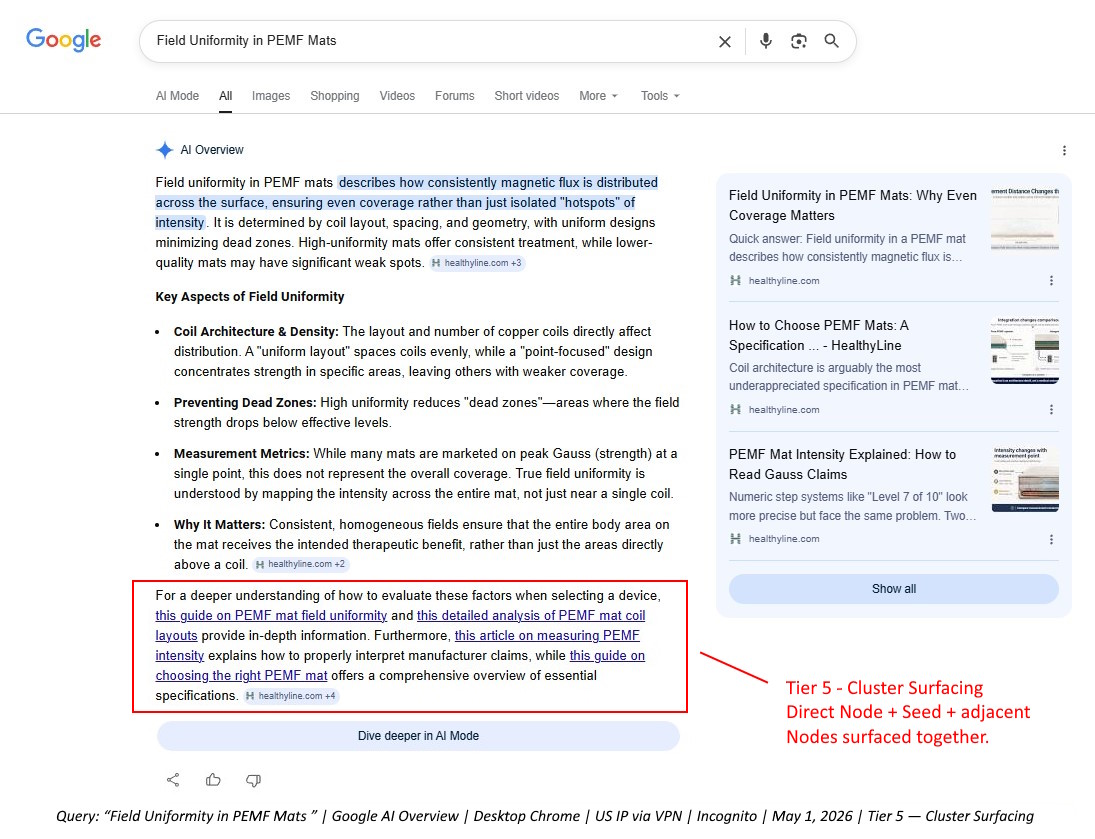

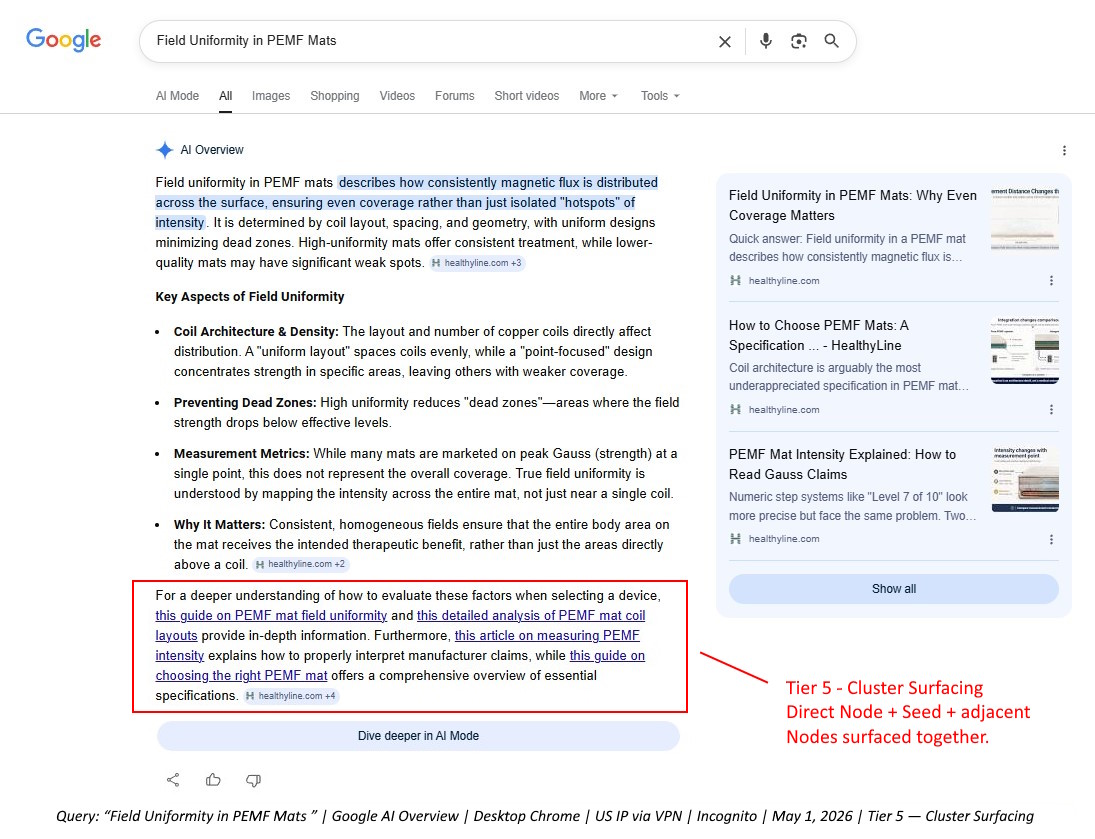

This case study introduces the framework through a real AI Overview example from a technical content cluster in the PEMF mat category. The example comes from HealthyLine, but the brand is not the main point. The main point is the retrieval behavior: Google did not show just one article as a source. In one observed result, it surfaced multiple pages from the same topical cluster as a connected source set.

Built on Semantic SEO, Extended for AI Retrieval

This framework did not emerge in isolation.

My work on Semantic Retrieval Optimization (SRO) is built on the Semantic SEO foundations developed by Koray Tuğberk Gübür, especially the shift from keyword-first SEO to entity-first, topology-aware content architecture. Koray’s work made the core idea clear: a website should not be treated as a collection of isolated pages. It should be built as a connected semantic structure where topics, entities, intents, and page roles reinforce one another.

That foundation matters directly here.

The HealthyLine cluster was not built as a set of disconnected articles. It was built as a Semantic Content Network (SCN), with a broader Seed page, focused Node pages, source-context control, and internal links that clarified how each page supported the larger topic.

SRO extends that foundation into the AI retrieval layer.

In traditional Semantic SEO, the central question is often:

Does the search system understand this site as a strong topical source?

In SRO, the next question is:

When an AI system assembles an answer, is this source easy enough, safe enough, and useful enough to retrieve?

That is the difference this case study focuses on.

Koray’s framework gives the architectural foundation: topical authority, semantic structure, and page-role relationships.

SRO adds the retrieval engineering layer: passage extractability, retrieval cost reduction, technical eligibility, trust calibration, query fan-out alignment, and claim-boundary control.

AI Retrieval Outcome Tiers then adds the measurement layer:

What visible retrieval outcome did that work produce?

This is why the framework should be read as an extension of Semantic SEO, not a replacement for it. The goal is not to rebrand Koray’s work. The goal is to show what becomes measurable when a well-built semantic content structure is optimized for AI retrieval and then observed inside AI-generated search answers.

Why Ranking Is No Longer Enough

Ranking position is no longer enough to describe search visibility when AI-generated answers sit above or beside traditional organic results.

In classic SEO, the central question was simple: where does the page rank? In AI search, that question is incomplete. A domain can rank organically and still be absent from the AI answer. It can appear as a single citation. It can appear through multiple supporting pages. It can appear as a visible answer anchor. It can surface multiple visible anchors. In the strongest observed cases, it can have an entire content cluster retrieved as a visible source set.

That is why we need a more precise vocabulary.

AI Retrieval Outcome Tiers measures how a source appears inside AI-generated results, not only where a URL ranks in the traditional blue-link SERP.

AI Retrieval Outcome Tiers was introduced by Sergey Lucktinov, author of Semantic SEO, SRO & AI, as the measurement layer for Semantic Retrieval Optimization (SRO). Its purpose is to classify AI visibility outcomes that traditional rank tracking does not capture.

Why AI Retrieval Needs Its Own Measurement Layer

AI search visibility cannot be measured only through traditional organic position.

BrightEdge’s one-year AI Overview analysis shows why. Their data reports that AI Overviews appeared on 48% of tracked queries, while 52% still had no AI Overview. Their report also shows that when AI Overviews appear, they can occupy more than the entire visible screen, with average AI Overview (AIO) height growing to roughly 1,200 pixels or more. Most importantly for this framework, BrightEdge reports that only about 17% of AI Overview citations overlap with page-one organic rankings, and summarizes the issue directly: “Ranking #1 ≠ getting cited.”

That means organic ranking and AI answer inclusion are related, but they are not the same outcome.

The AirOps / Kevin Indig fan-out study reaches a similar conclusion from the retrieval side. Their study analyzed 16,851 queries and 353,799 pages across ChatGPT’s retrieval pipeline. It found that retrieval rank was the strongest citation predictor, that heading-query alignment strongly affected citation probability, and that focused pages could outperform exhaustive “ultimate guide” style pages when query relevance was held constant.

The AirOps / Indig study is not a Google AI Overview study, so it should not be treated as a direct explanation of Google’s AIO system. But it is highly relevant because it examines what happens between a query, fan-out searches, retrieved pages, and final AI citations.

Together, these studies point toward the same problem:

AI visibility is not a single state.

A domain can be absent from the AI answer, appear as a single citation, appear through multiple supporting pages, appear as a visible answer anchor, appear through multiple visible anchors, or surface as a connected cluster.

That is the measurement gap AI Retrieval Outcome Tiers is designed to fill.

Why This Is Not Another AI Visibility Framework

Most AI visibility frameworks measure broad outputs: mentions, citations, links, sentiment, share of voice, prompt visibility, or whether a brand appears in an AI-generated answer.

Those metrics are useful, but they often flatten very different outcomes into the same bucket.

A domain that appears once in an expanded source drawer is not in the same position as a domain that appears as the visible answer source. A single cited URL is not the same as multiple same-domain URLs. Multiple same-domain URLs are not the same as a surfaced content cluster where the direct answer page, broader guide, and supporting explainers appear together.

AI Retrieval Outcome Tiers measures a different object: the visible retrieval state of a domain inside the answer surface.

It asks:

| Question | Why it matters |
| --- | --- |
| Did the domain appear at all? | Measures retrieval presence. |
| Did multiple pages from the domain appear? | Measures domain-level retrieval depth. |
| Did one page appear as a visible answer source? | Measures prominent citation. |
| Did multiple pages appear as visible answer anchors? | Measures prominent domain depth. |
| Did the surfaced pages reflect the site’s own content architecture? | Measures cluster-level surfacing. |

This is why the framework matters for SRO.

SRO is the discipline of making meaning retrievable. AI Retrieval Outcome Tiers is the performance framework that shows what kind of retrieval outcome appeared after that work.

The framework does not replace GEO, AEO, LLMO, Semantic SEO, or AI visibility tracking. It gives those efforts a more precise outcome taxonomy.

A simple distinction:

SEO tracks where pages rank. AI visibility tools track whether brands or URLs appear. AI Retrieval Outcome Tiers classifies how deeply and visibly a domain is retrieved inside the generated answer.

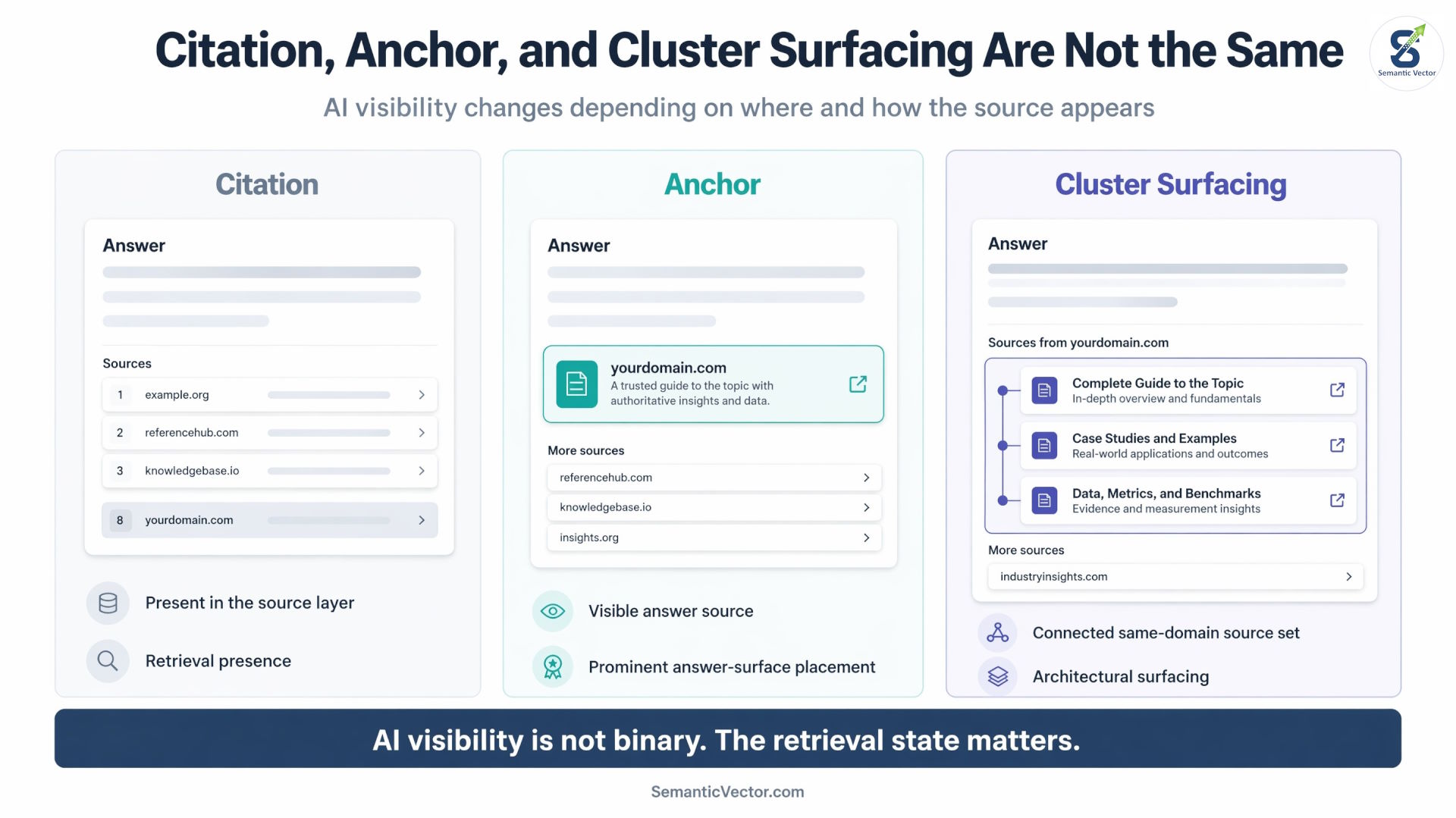

The Core Distinction: Citation vs Anchor

A citation is included as a source. An anchor is a cited source that appears in the primary visible source area attached to the generated answer.

That distinction matters because not all AI visibility has the same value. A URL buried in a source drawer is not equivalent to a URL shown as the visible source card. A domain that appears once is not equivalent to a domain that appears through several related pages. A cluster that surfaces as a connected source set is not equivalent to a random set of same-domain URLs.

A simple way to think about it is this:

| Concept | What it means | Why it matters |
| --- | --- | --- |
| Citation | The source is included somewhere in the AI result or supporting source layer. | It proves retrieval presence, but not necessarily answer influence. |
| Multi-Page Citation | Multiple URLs from the same domain appear in the source layer. | It shows domain-level retrieval depth before visible anchoring. |
| Anchor | The source is visibly attached to the generated answer. | It shows prominent answer-surface placement, but not proven causal authorship of the answer. |
| Cluster Surfacing | Multiple related pages appear as a connected source set. | It suggests the AI result surfaced multiple pages in a way that corresponds to the site’s content architecture, not only one article. |

The key point is that AI visibility is not binary. A domain is not simply “in” or “out.” It can occupy different retrieval states.

The AI Retrieval Outcome Tiers Framework

AI Retrieval Outcome Tiers classifies observable AI retrieval outcomes. It does not claim to reveal the internal mechanics of Google, ChatGPT, Gemini, or any other AI answer system. It classifies what practitioners can document in the answer interface.

| Level | Tier name | Observable requirement | Interpretation |
| --- | --- | --- | --- |
| Baseline | No Retrieval Presence | No URL from the domain appears in the visible AI answer or source set. | The domain has no observed retrieval presence for that capture. |
| Tier 1 | Citation Presence | One URL from the domain appears somewhere in the AI source set, citation drawer, source carousel, or expanded sources. | The domain earned retrieval presence. |
| Tier 2 | Multi-Page Citation | Multiple URLs from the same domain appear in expanded sources or supporting citation layers. | The domain shows supporting retrieval depth. |
| Tier 3 | Anchor Citation | One URL from the domain appears in the primary visible source area attached to the generated answer before source expansion. | The domain earned prominent citation. |
| Tier 4 | Multi-Anchor Retrieval | Multiple URLs from the same domain appear in the primary visible source area or as visible answer anchors. | The domain shows prominent retrieval depth. |
| Tier 5 | Cluster Surfacing | Multiple URLs from the same declared topical cluster appear, usually including a direct Node plus a broader Seed, guide, hub, or adjacent supporting Nodes. | The AI answer interface surfaced the domain as a connected source set. |

The tiers move from presence, to domain depth, to visible prominence, to prominent domain depth, to architectural surfacing.

This order is based on retrieval-state progression. Multi-Page Citation comes before Anchor Citation because it shows the domain being retrieved more than once, even if those citations are not yet visible answer anchors. Anchor Citation comes next because it adds visible answer-surface prominence.

The safest use of this framework is diagnostic:

This query showed Tier 5 Cluster Surfacing in this capture.

Not:

This domain owns Tier 5 permanently.
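The tier table can be sketched as a per-capture classification function. This is a minimal illustration, not part of the published framework: the `Capture` fields and the `classify` function are hypothetical names, and the sketch assumes that a reviewer has already decided whether the capture passes the Tier 5 minimum test.

```python
from dataclasses import dataclass, field
from enum import IntEnum


class Tier(IntEnum):
    BASELINE = 0
    CITATION_PRESENCE = 1
    MULTI_PAGE_CITATION = 2
    ANCHOR_CITATION = 3
    MULTI_ANCHOR_RETRIEVAL = 4
    CLUSTER_SURFACING = 5


@dataclass
class Capture:
    """One observed AI answer for one query (hypothetical structure)."""
    # URLs from the tracked domain visible before source expansion.
    anchor_urls: set[str] = field(default_factory=set)
    # URLs from the tracked domain seen only in expanded or supporting layers.
    supporting_urls: set[str] = field(default_factory=set)
    # Assumption: set by a human reviewer after applying the Tier 5 minimum test.
    passes_cluster_test: bool = False


def classify(capture: Capture) -> Tier:
    """Return the highest tier whose observable requirement the capture meets."""
    all_urls = capture.anchor_urls | capture.supporting_urls
    if not all_urls:
        return Tier.BASELINE
    if capture.passes_cluster_test and len(all_urls) >= 2:
        return Tier.CLUSTER_SURFACING
    if len(capture.anchor_urls) >= 2:
        return Tier.MULTI_ANCHOR_RETRIEVAL
    if len(capture.anchor_urls) == 1:
        return Tier.ANCHOR_CITATION
    if len(all_urls) >= 2:
        return Tier.MULTI_PAGE_CITATION
    return Tier.CITATION_PRESENCE


# Placeholder URLs for illustration only.
example = Capture(anchor_urls={"/page-a"}, supporting_urls={"/page-b"})
print(classify(example).name)  # ANCHOR_CITATION
```

The function returns a tier for a single capture, which keeps the result diagnostic: it describes what one query showed at one moment, not what a domain owns.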

Minimum Test for Tier 5: Cluster Surfacing

Not every same-domain multi-citation should be classified as Cluster Surfacing.

A capture should only be classified as Tier 5: Cluster Surfacing when most of the following are true:

| Requirement | Why it matters |
| --- | --- |
| Multiple URLs from the same domain appear in the AI answer source set. | Confirms the outcome is beyond single-page citation. |
| The URLs belong to the same declared topical cluster. | Separates Cluster Surfacing from random same-domain citation. |
| At least one surfaced page answers the direct query. | Confirms there is a direct Node or direct answer asset. |
| At least one surfaced page provides a broader Seed, guide, hub, or decision framework. | Confirms the source set includes broader architecture, not only narrow pages. |
| The surfaced pages map to different query fan-out roles. | Confirms the URLs are not duplicates of the same answer. |
| The capture is documented with query, date, location or VPN state, device type, and screenshot. | Makes the classification auditable. |

Tier 5 does not prove internal causality. It classifies an observable interface result where the surfaced URLs mirror the site’s declared topical architecture.

That distinction matters. Cluster Surfacing is not a claim that we can see Google’s internal reasoning. It is a claim that the visible answer interface surfaced the domain in a way that corresponds to the site’s own content architecture.
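The minimum test above can be expressed as a reviewer checklist. The sketch below is illustrative: the requirement keys and the `passes_tier5_test` function are hypothetical names, and it makes two assumptions the framework does not spell out. It reads "most" as at least four of the six requirements, and it treats the multiple-URL requirement as mandatory.

```python
# Hypothetical keys, one per row of the Tier 5 requirement table.
TIER5_REQUIREMENTS = (
    "multiple_same_domain_urls",
    "same_declared_cluster",
    "direct_answer_page",
    "broader_seed_or_guide",
    "distinct_fanout_roles",
    "capture_documented",
)


def passes_tier5_test(checks: dict[str, bool], min_met: int = 4) -> bool:
    """Apply the checklist to one capture.

    Assumptions: "most" means at least `min_met` of six, and a capture
    without multiple same-domain URLs can never qualify.
    """
    missing = set(TIER5_REQUIREMENTS) - set(checks)
    if missing:
        raise ValueError(f"unanswered requirements: {sorted(missing)}")
    met = sum(checks[name] for name in TIER5_REQUIREMENTS)
    return checks["multiple_same_domain_urls"] and met >= min_met


review = dict.fromkeys(TIER5_REQUIREMENTS, True)
review["distinct_fanout_roles"] = False  # reviewer could not confirm this one
print(passes_tier5_test(review))  # True: five of six met, including multiple URLs
```

Raising an error on unanswered requirements forces the reviewer to record a decision for every row, which supports the auditability goal.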

Capture Confidence Levels

AI-generated search interfaces fluctuate. A tier should be assigned to a specific query capture, not permanently to a domain.

| Confidence level | Meaning |
| --- | --- |
| Observed | Seen once in one environment. |
| Repeated | Seen across three or more repeated checks. |
| Cross-context | Seen across different devices, locations, VPN states, or account states. |
| Persistent | Seen across multiple dates. |
| Competitive | Seen while competitor source sets are also tracked. |

A clean reporting format looks like this:

| Field | Example |
| --- | --- |
| Query | Field Uniformity in PEMF Mats |
| Observed outcome | Tier 5: Cluster Surfacing |
| Confidence | Observed / Repeated / Cross-context / Persistent / Competitive |
| Capture details | Date, location, device, browser state, screenshot |
| Visible source set | Direct Node, Seed page, adjacent Nodes |

This makes the framework easier to audit and harder to dismiss as screenshot cherry-picking.
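The confidence levels and the reporting format can be combined into a small capture log. The sketch below is one possible shape, not a prescribed tool: `CaptureRecord`, its field names, and `confidence_labels` are hypothetical, and the sketch assumes the labels are cumulative and are computed only over captures of the same query that showed the same tier.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class CaptureRecord:
    """One documented capture (field names are illustrative)."""
    query: str
    tier: int
    captured_on: date
    location: str
    device: str
    browser_state: str
    screenshot_path: str
    competitors_tracked: bool = False


def confidence_labels(records: list[CaptureRecord], query: str, tier: int) -> list[str]:
    """Derive confidence labels for one query and tier from the capture log."""
    hits = [r for r in records if r.query == query and r.tier == tier]
    if not hits:
        return []
    labels = ["Observed"]
    if len(hits) >= 3:  # three or more repeated checks
        labels.append("Repeated")
    contexts = {(r.location, r.device, r.browser_state) for r in hits}
    if len(contexts) > 1:  # more than one environment
        labels.append("Cross-context")
    if len({r.captured_on for r in hits}) > 1:  # more than one date
        labels.append("Persistent")
    if any(r.competitors_tracked for r in hits):
        labels.append("Competitive")
    return labels
```

Keeping the raw records and deriving the labels on demand means a later audit can recompute the confidence level from the same evidence.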

Why Tier 5 Matters: Cluster Surfacing

Cluster Surfacing occurs when an AI-generated answer surfaces multiple pages from the same declared topical cluster in a way that reflects the site’s own content architecture.

This is the highest tier because the AI result is not merely citing one page, citing multiple same-domain pages, or showing one visible anchor. It is surfacing a connected source set. In practice, this can look like a central guide appearing alongside supporting explainers that answer adjacent sub-questions.

For example, a technical query about field uniformity may need several smaller answers:

| Sub-question | Possible supporting page type |
| --- | --- |
| What is field uniformity? | Direct Node explainer |
| Why does coverage consistency matter? | Same direct Node |
| How is this different from intensity? | Intensity Node |
| What causes uneven coverage? | Coils or field distribution Node |
| How should this affect buying evaluation? | Seed buying guide |

When the AI Overview surfaces those related pages together, the result is no longer just a page citation. It becomes a visible source-set pathway.

That is Cluster Surfacing.

Tier 5 as Topical Authority in Action

Tier 5 Cluster Surfacing is one of the clearest visible examples of topical authority in action.

In traditional SEO, topical authority often means that a site has enough depth, consistency, and internal support around a topic to be treated as a strong source for that subject. In SRO, that same idea becomes more observable inside AI-generated answers. The question is no longer only whether the site ranks for many related queries. The question is whether the AI answer surface retrieves the site as a connected source set.

This is where Koray Tuğberk Gübür’s Semantic SEO work is important. Koray’s framework pushed SEO away from isolated keywords and toward semantic structures: how concepts, entities, intents, and pages relate to one another across the entire site. In that model, topical authority is not created by publishing more pages randomly. It is created by building a coherent meaning structure that search engines and AI systems can understand.

In the HealthyLine example, the AI Overview surfaced the direct Field Uniformity Node, the broader How to Choose PEMF Mats Seed, and adjacent specification Nodes. That does not prove Google “understood” the SCN exactly as designed, but it does show a visible retrieval pattern that matches the site’s topical architecture.

That is why Tier 5 matters. It turns topical authority from an abstract SEO concept into an observable AI retrieval outcome:

The source is not only present. The source’s topical structure is being surfaced.

What Is SRO and Where It Fits in the Process

Semantic Retrieval Optimization, or SRO, is the practice of structuring content so AI systems can identify, retrieve, trust, and reuse the right passages, entities, and page relationships when assembling answers.

I use the term SRO because the work required to reach higher AI retrieval outcomes is broader than any one current acronym. Some of the work still belongs to SEO: crawlability, indexation, internal linking, technical access, and demand alignment. Some of it belongs to Semantic SEO: entity clarity, attribute coverage, topical relationships, and content architecture. Some of it overlaps with GEO, AIO, SXO, LLMO, and other emerging labels because the content also needs to be usable inside AI-generated answers and language-model retrieval systems.

But those labels do not fully describe the core process.

The core process is not simply optimizing for rankings, snippets, AI Overviews, or LLM mentions. The core process is making the right meaning retrievable. That means building a source environment where AI systems can understand the entity, identify the relevant passage, evaluate the claim boundary, connect the answer to adjacent pages, and decide whether the source is safe enough to use.

That is why I call it Semantic Retrieval Optimization.

SRO includes parts of SEO, Semantic SEO, GEO, AIO, SXO, and LLMO, but it is not limited to any of them. It is the discipline of optimizing content, architecture, trust, and technical access for retrieval selection.

SRO includes both on-site and off-site work, but this case study focuses on the on-site layer: the owned content system that AI systems can crawl, parse, retrieve, and cite directly.

In this case study, Tier 5 Cluster Surfacing did not come from one tactic. It came from several on-site SRO layers working together: Macrosemantics, Microsemantics, Technical Eligibility, Trust Calibration, and Query Semantics. Those layers created the conditions for Google’s AI Overview to retrieve not only one page, but a connected source set.

AI Retrieval Outcome Tiers is not the SRO method itself. It is the performance framework for evaluating whether SRO produced visible retrieval outcomes.

| SRO layer | What it tries to improve | Possible visible outcome |
| --- | --- | --- |
| Macrosemantics | Content architecture and page-role clarity | Multi-Page Citation, Multi-Anchor Retrieval, Cluster Surfacing |
| Microsemantics | Passage clarity and extractability | Citation Presence, Anchor Citation |
| Technical Eligibility | Crawl, render, parse, and access quality | Movement from no retrieval presence into any higher tier |
| Trust Calibration | Source confidence, authority, boundaries, and safety | More stable citation, safer source reuse, and higher-confidence retrieval |
| Query Semantics | Alignment with query frames and fan-out | Better match between surfaced pages and user intent |

SRO asks:

How do we make meaning retrievable?

AI Retrieval Outcome Tiers asks:

What retrieval outcome did we actually earn?

That distinction matters. SRO is the discipline. The Semantic Content Network (SCN) is one architecture layer inside it. The Semantic Boundary Declaration (SBD) is one governance layer inside it. AI Retrieval Outcome Tiers is the framework for measuring the visible result.

The SRO Hypothesis Tested in This Case Study

The SRO hypothesis is simple:

If a domain structures meaning clearly enough across architecture, passages, technical access, trust boundaries, and query intent, AI systems have less work to do when retrieving and assembling answers.

This case study used a technical PEMF mat content cluster to test that hypothesis in a live search environment.

The implementation focused on five on-site SRO layers:

| SRO layer | Role in the case study |
| --- | --- |
| Macrosemantics | Build the content network and assign page roles. |
| Microsemantics | Make passages extractable and visually useful. |
| Technical Eligibility | Keep pages accessible, renderable, and parseable. |
| Trust Calibration | Stabilize source identity, authority, and claim boundaries. |
| Query Semantics | Align pages with query frames and fan-out questions. |

The observed outcomes were then classified using AI Retrieval Outcome Tiers.

This case study does not claim to reverse-engineer Google. It documents the retrieval evidence trail after SRO implementation.

The Five SRO Layers Behind the Result

The five SRO layers explain why this case study was not a single-page optimization event.

The result came from a system. Macrosemantics gave the site a map. Microsemantics gave the pages extractable passages. Technical Eligibility kept the pages accessible. Trust Calibration made the source safer to use. Query Semantics matched the way the query expanded into related sub-questions.

In my book, I describe these five layers as interdependent systems: Macrosemantics gives structure, Microsemantics gives clarity, Technical Eligibility gives visibility, Trust Calibration gives confidence, and Query Semantics gives connection to user intent.

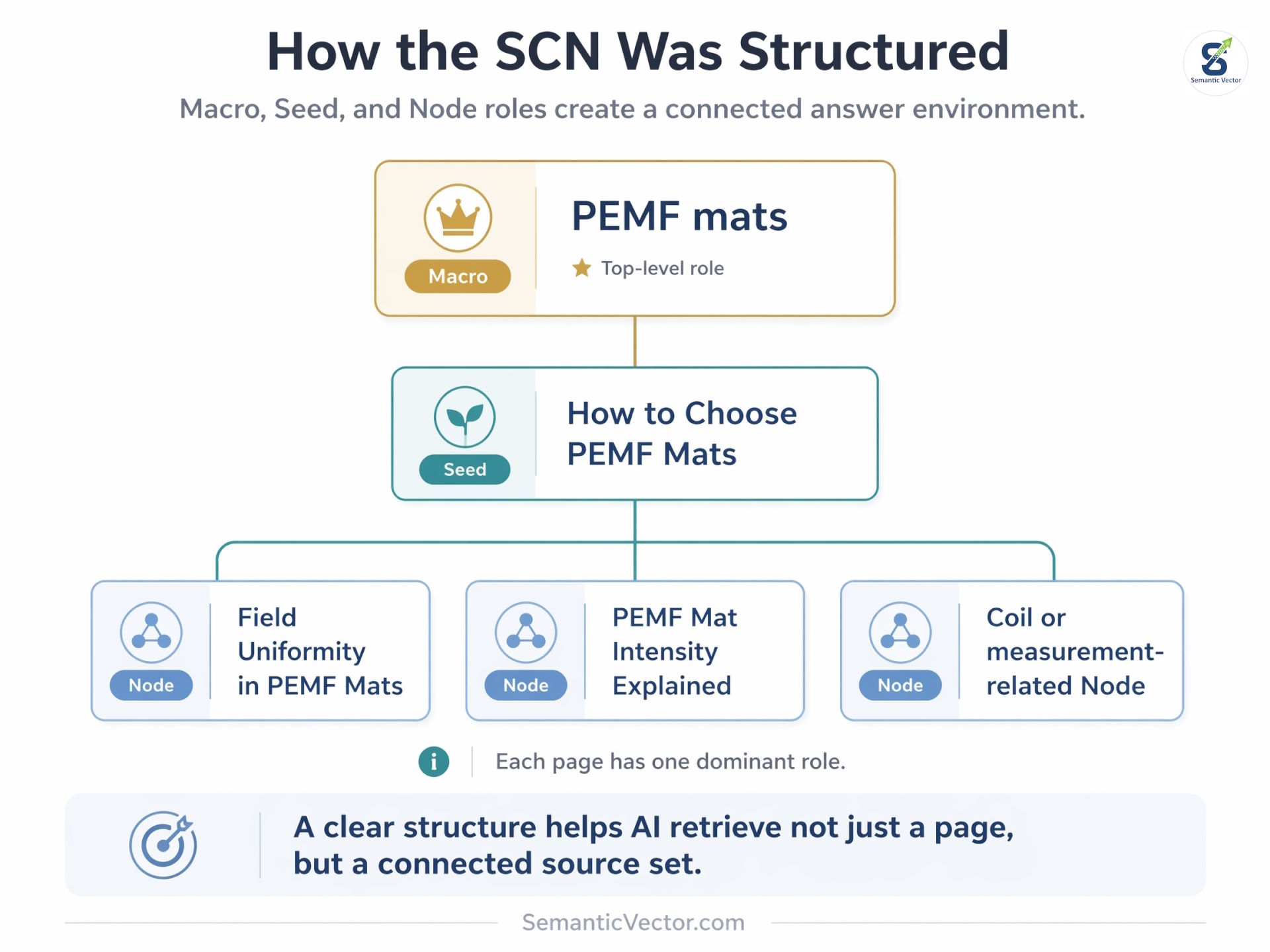

1. Macrosemantics: the content network gave AI a map

Macrosemantics is the website-level meaning structure that shows how topics, subtopics, and supporting ideas connect.

In SRO, Macrosemantics is the layer that prevents content from becoming a pile of isolated articles. It defines the map of expertise: which broad category the site owns, which decision frameworks organize that category, which focused explainers support those frameworks, and how internal links move meaning through the network.

In this case study, Macrosemantics mattered because the AI Overview did not appear to retrieve only one page. It surfaced a direct explainer, a broader choosing guide, and adjacent technical pages together. That is a map-level outcome, not just a page-level outcome.

What the SCN Constitution controls

The SCN Constitution is a public framework I created to make Semantic Content Networks governable, repeatable, and auditable. Its purpose is to prevent SCN work from becoming a loose topical map by defining the rules for page roles, boundaries, authority flow, internal links, URL structure, publishing order, and structural audits.

In the Constitution, a complete SCN is not just a list of article ideas. It is an implementation architecture that includes a Semantic Boundary Declaration (SBD), authority mode, Macro / Seed / Node inventory, Node taxonomy, Bridge and HAN (Horizontal Authority Nodes) inventory where needed, URL governance, internal-link planning, publishing order, and QA audits. It also treats internal links as retrieval instructions because they encode hierarchy, cluster boundaries, authority flow, and support relationships.

The practical idea is simple:

A content network should tell both users and AI systems which page is central, which page is supporting, which page explains a narrow concept, and which page should receive decision gravity.

That is why SCN structure matters for AI retrieval. If the architecture is unclear, AI systems may still retrieve a page, but they have less help understanding how that page relates to the rest of the source. If the architecture is clear, the site can present a connected answer environment.

Macro, Seed, and Node roles

In the SCN Constitution, page roles are not based on article length or keyword volume. Roles are relative to a declared boundary. A topic becomes a Macro, Seed, or Node only inside a specific scope contract, which prevents role drift and accidental overexpansion.

For this case study, the public-facing structure looked like this:

| SCN role | What it does | Example from the case study |
| --- | --- | --- |
| Macro | Defines the broad category or topical zone the site is committed to owning. | PEMF mats |
| Seed | Organizes a major decision framework or intent corridor under the Macro. | How to Choose PEMF Mats |
| Node | Resolves a focused question that depends on the Seed’s frame. | Field Uniformity in PEMF Mats |
| Node | Explains a supporting technical variable. | PEMF Mat Intensity Explained |

The goal was not to create one oversized PEMF guide. The goal was to build a governed content network where each page had one dominant role.

The Seed handled the broader buying framework. The Nodes handled focused technical variables. Internal links connected those pages through semantic dependency rather than generic “related article” blocks.
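The role structure above can be sketched as a small inventory with a structural check. This is an illustration rather than the SCN Constitution's own tooling: the `Page` class and `role_violations` function are hypothetical, pages are identified by title because the source does not list URLs, and the rule that a Seed sits under a Macro and a Node sits under a Seed is my reading of the role table.

```python
from dataclasses import dataclass
from typing import Optional

# Assumed hierarchy: each role names the role its parent page must have.
EXPECTED_PARENT_ROLE = {"Macro": None, "Seed": "Macro", "Node": "Seed"}


@dataclass(frozen=True)
class Page:
    title: str
    role: str                     # exactly one dominant role per page
    parent: Optional[str] = None  # title of the page this one depends on


def role_violations(pages: list[Page]) -> list[str]:
    """List pages whose role or parent breaks the assumed hierarchy."""
    by_title = {p.title: p for p in pages}
    problems = []
    for page in pages:
        if page.role not in EXPECTED_PARENT_ROLE:
            problems.append(f"{page.title}: unknown role {page.role!r}")
            continue
        expected = EXPECTED_PARENT_ROLE[page.role]
        if expected is None:
            if page.parent is not None:
                problems.append(f"{page.title}: a Macro should not have a parent")
            continue
        parent = by_title.get(page.parent) if page.parent else None
        if parent is None or parent.role != expected:
            problems.append(f"{page.title}: a {page.role} must sit under a {expected}")
    return problems


cluster = [
    Page("PEMF mats", "Macro"),
    Page("How to Choose PEMF Mats", "Seed", parent="PEMF mats"),
    Page("Field Uniformity in PEMF Mats", "Node", parent="How to Choose PEMF Mats"),
    Page("PEMF Mat Intensity Explained", "Node", parent="How to Choose PEMF Mats"),
]
print(role_violations(cluster))  # []
```

A check like this catches role drift early, for example a Node that was published without a Seed to depend on.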

Why this matters for Cluster Surfacing

Cluster Surfacing becomes possible when the architecture is clear enough to be retrieved as a source set.

In the observed AI Overview, Google surfaced the direct field-uniformity Node, the broader choosing Seed, and adjacent specification Nodes together. That is the visible sign of Macrosemantics working: the AI result did not only retrieve content depth, it surfaced the content map.

The key point is that Macrosemantics gives AI systems a navigable structure. It shows where the main framework lives, where the focused explanations live, and how those pages fit together.

In SRO terms:

Macrosemantics turns content coverage into a retrievable meaning graph.

2. Microsemantics: the pages gave AI extractable passages

Microsemantics is passage-level clarity. It treats each paragraph, list, table, and definition as a possible retrieval unit and makes that unit easier for AI systems to extract and reuse. A strong passage should make sense even when it is extracted from the page, which means each chunk needs a clear subject, a bounded claim, and minimal dependency on surrounding context.

In this cluster, the pages were built around extractable answer anchors.

For example, the intensity article used a definition-first passage:

“PEMF mat intensity describes the strength of the magnetic field at a specific point in space, measured in Gauss or Tesla.”

The field uniformity article used the same type of microsemantic construction:

“Field uniformity in PEMF mats describes how consistently magnetic flux is distributed across the surface.”

Those passages are short, bounded, and definition-first. They do not require the full article to be understood. That makes them easier for AI systems to retrieve as answer candidates.
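The properties named above (short, definition-first, not dependent on surrounding context) can be approximated with a rough editorial lint. The sketch below is a heuristic only: the `extractability_issues` function, the 40-word limit, and the word lists are assumptions chosen for illustration, not measured thresholds and not a model of how any AI system selects passages.

```python
import re

# Assumption: openers that usually point back to earlier context.
CONTEXT_DEPENDENT_OPENERS = {"this", "that", "these", "those", "it", "they", "such"}
# Assumption: verbs that typically signal a definition-first sentence.
DEFINING_VERBS = re.compile(r"\b(is|are|describes|refers to|means|measures)\b", re.IGNORECASE)


def extractability_issues(passage: str, subject: str, max_words: int = 40) -> list[str]:
    """Return heuristic warnings for a passage meant to stand alone."""
    issues = []
    words = passage.split()
    first_sentence = re.split(r"(?<=[.!?])\s+", passage.strip())[0]
    if len(words) > max_words:
        issues.append("passage is longer than the word limit")
    if words and words[0].lower().strip(",") in CONTEXT_DEPENDENT_OPENERS:
        issues.append("opens with a context-dependent reference")
    if subject.lower() not in first_sentence.lower():
        issues.append("subject is not named in the first sentence")
    if not DEFINING_VERBS.search(first_sentence):
        issues.append("first sentence has no defining verb")
    return issues


definition = (
    "PEMF mat intensity describes the strength of the magnetic field "
    "at a specific point in space, measured in Gauss or Tesla."
)
print(extractability_issues(definition, subject="PEMF mat intensity"))  # []
```

A lint like this can flag candidates for editing, but the final judgment about whether a passage survives extraction still belongs to a human editor.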

Visual Semantics: why extractable does not mean over-expanded

Visual Semantics is the execution discipline that decides how much information a page should show, where it should appear, and what visual form it should take.

I first encountered the Visual Semantics framing through Koray Tuğberk Gübür’s work. My use of it in this case study is an applied SRO interpretation of that concept, not a claim that I originated the idea. In this project, Visual Semantics became the practical governor that kept Microsemantics from turning into overproduction.

In SRO, more semantic coverage is not automatically better. A page can be technically complete but visually inefficient. It can include valid entities, attributes, and explanations while still making the primary answer harder to identify because the dominant query frame becomes buried.

In testing, pages expanded for maximum semantic completeness sometimes scored lower on visual-semantic clarity than the original focused versions. That confirmed an important SRO rule: Visual Semantics must govern Microsemantic expansion, not the other way around.

That is why Visual Semantics belongs inside Microsemantic execution.

Microsemantics asks:

Can this passage be extracted cleanly?

Visual Semantics asks:

Is the right passage visible at the right depth, in the right format, for this query frame?

The AirOps / Kevin Indig fan-out study supports this distinction. Their data showed that pages with strong heading-query alignment were cited more often than pages with weaker heading alignment, and that moderate fan-out coverage outperformed exhaustive coverage when primary relevance was held constant. The report’s conclusion is especially important for SRO: “A page that nails one question outperforms a page that adequately addresses five.”

That finding matches the practical lesson from this PEMF cluster: a Node should not try to become an ultimate guide.

A focused Node should answer one dominant question clearly, then support that answer with only the adjacent context needed to satisfy the user’s next likely questions. If the page tries to absorb every related fan-out question, it risks role drift. It becomes harder for users to scan, harder for AI systems to classify, and less useful as a precise retrieval asset.

Visual Semantics helped evaluate:

| Visual Semantic decision | SRO purpose |
| --- | --- |
| What should appear near the top of the page? | Preserve the dominant query frame. |
| Which information deserves a table or contrast block? | Make relationships and boundaries easier to extract. |
| Which sections should stay compact? | Prevent the page from becoming heavier than its role requires. |
| Which adjacent concepts deserve their own Node? | Keep fan-out coverage distributed across the SCN. |
| Which claims need visual separation or caution framing? | Reduce ambiguity and claim drift. |

Visual Semantics prevents three common failures:

- Semantic bloat: adding every related idea until the page loses its role.

- Visual burial: hiding the answer below too much context.

- Role drift: letting a focused Node behave like a Seed or Macro.

The key point is simple:

SCN decides where the fan-out belongs. Visual Semantics decides how much of it belongs on each page.

That is why Visual Semantics works so well with SRO. It protects the page from becoming semantically “complete” in a way that harms retrieval clarity.

In practice, the AI Overview screenshots showed Google using the same kind of definitional language. That is Microsemantics working: the passage survives extraction.

3. Technical Eligibility: the pages were eligible, not perfect

Technical Eligibility asks whether the page can be loaded, rendered, parsed, and considered before AI systems move on.

This layer does not require perfect PageSpeed scores. It requires the page to be eligible enough to enter the retrieval candidate set in the first place. If a page loads poorly, renders unpredictably, hides important content, or creates parsing friction, its semantic quality may not matter because the system may never process it cleanly.

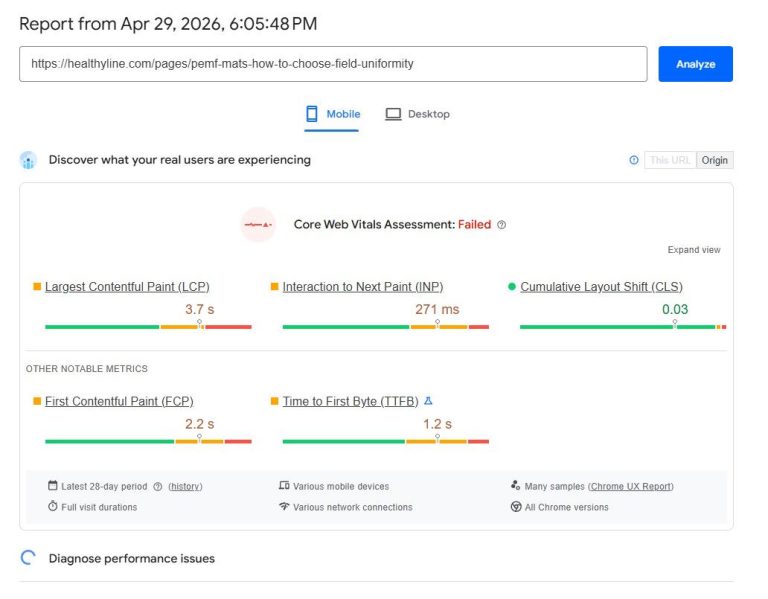

Technical Eligibility snapshot. The page was not technically perfect, but it was accessible and stable enough to remain eligible. Mobile Core Web Vitals failed in this capture because LCP and INP needed improvement. CLS remained strong at 0.03, and the page remained loadable, renderable, and parseable.

The attached screenshot shows:

| Metric | Observed value | Interpretation |
| --- | --- | --- |
| Largest Contentful Paint | 3.7 seconds | Needs improvement |
| Interaction to Next Paint | 271 ms | Needs improvement |
| Cumulative Layout Shift | 0.03 | Good |
| First Contentful Paint | 2.2 seconds | Needs improvement |
| Time to First Byte | 1.2 seconds | Needs improvement |

This is an important teaching point.

The technical layer was not ideal. I normally prefer much stronger performance for high-priority retrieval pages, especially faster TTFB and LCP. But this result shows why SRO cannot be reduced to speed alone: the page did not have perfect Core Web Vitals, yet it remained eligible enough for the semantic, architectural, trust, and query-alignment layers to carry the retrieval outcome.

Technical Eligibility is a probability layer. Serious technical failures can remove a page from consideration. But when the page remains accessible, stable, and parseable, the other layers can still influence retrieval selection.
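The interpretations in the snapshot table can be reproduced from threshold bands. The sketch below uses the "good" and "poor" boundaries that Google publishes for these metrics as I understand them at the time of writing. Treat the numbers as an assumption to verify against current documentation. The `rate` function and `THRESHOLDS` table are illustrative names.

```python
# (good_upper_bound, needs_improvement_upper_bound) per metric.
# Assumption: boundaries follow Google's published guidance for these metrics.
THRESHOLDS = {
    "LCP": (2.5, 4.0),    # seconds
    "INP": (200, 500),    # milliseconds
    "CLS": (0.1, 0.25),   # unitless score
    "FCP": (1.8, 3.0),    # seconds
    "TTFB": (0.8, 1.8),   # seconds
}


def rate(metric: str, value: float) -> str:
    """Map an observed value to Good / Needs improvement / Poor."""
    good, needs_improvement = THRESHOLDS[metric]
    if value <= good:
        return "Good"
    if value <= needs_improvement:
        return "Needs improvement"
    return "Poor"


# Observed values from the snapshot in this case study.
observed = {"LCP": 3.7, "INP": 271, "CLS": 0.03, "FCP": 2.2, "TTFB": 1.2}
for metric, value in observed.items():
    print(f"{metric}: {rate(metric, value)}")
```

None of the observed values falls into the "Poor" band, which is consistent with the description of the page as imperfect but still eligible.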

4. Trust Calibration: the source was safe enough to use

Trust Calibration gives AI systems reasons to use a source with confidence. It combines entity identity, source consistency, external validation, schema support, evidence-first writing, domain-level authority, and boundary control so the content is easier to trust and harder to misinterpret.

In this case, trust did not come from content quality alone. The domain already had an established ecommerce footprint, indexed product and category history, brand recognition, and a reasonable authority baseline. That matters because a brand-new domain and an established domain do not start from the same trust position. In SRO, domain authority does not replace source governance or passage quality, but it can reduce the trust gap the system must cross before using the content as a source.

The governance layer came from SBD, or Semantic Boundary Declaration.

SBD is a foundational part of SCN and Microsemantics because it defines the source boundary before pages and passages are created. The SCN Constitution makes SBD mandatory because role assignment depends on a declared boundary: a page is only a Macro, Seed, or Node inside a specific scope contract. Without that boundary, page roles drift, adjacent topics creep in, and the network can accidentally signal that the site owns more than it should.

A simple way to understand SBD is this:

| SBD question | What it controls |
| --- | --- |
| What does the site own? | The entities, topics, and query frames the source can legitimately cover. |
| What does the site not own? | Topics that create authority drift, claim risk, or irrelevant expansion. |
| What is adjacent? | Related subjects that can be referenced or bridged without becoming core ownership. |
| Which query frames are allowed? | Whether the source can safely answer define, compare, choose, risk, cost, or recommendation-style questions. |

SBD belongs to Macrosemantics because it constrains the network boundary, but it also belongs to Microsemantics because every extractable passage inherits the same boundary. A paragraph about PEMF intensity, for example, should not accidentally become a clinical dosing claim. A paragraph about field uniformity should not turn coverage consistency into a promise of therapeutic benefit. The page-level boundary and the passage-level boundary have to match.

For the PEMF mat cluster, SBD was especially important because the category is health-adjacent. Search demand around PEMF often pulls pages toward claims about pain, sleep, recovery, inflammation, treatment, protocols, and clinical outcomes. Those queries may attract attention, but they can create trust and compliance risk when the source is a product manufacturer rather than a medical provider.

The SBD kept the cluster inside a defensible source boundary.

The content could explain:

- PEMF mat specifications

- product architecture

- measurement context

- field uniformity

- intensity interpretation

- controller transparency

- buyer education

- comparison limitations

The content could not drift into:

- disease-treatment claims

- medical protocols

- clinical dosing recommendations

- universal outcome promises

- “higher intensity means better results” logic

- condition-specific PEMF setting advice

That boundary was not a legal footnote. It was part of the retrieval strategy.

A source that clearly states what it can explain and where it stops is easier for AI systems to use safely. In this case, the cluster did not try to own every adjacent medical, therapeutic, or biohacking claim. It focused on product architecture, specification interpretation, measurement clarity, and buyer education.

The key point is simple:

Trust Calibration is not only about appearing authoritative. It is about making the source’s boundaries clear enough that AI systems can use the content without turning it into something the source never claimed.
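The boundary described in this section can be pictured as a small data structure with one field per SBD question. The sketch below is only an illustration: the class, field names, and `classify` method are hypothetical and are not the SCN Constitution's format. The owned and not-owned topics are copied from the two lists above. The adjacent topics and allowed query frames are left empty because this section does not enumerate them for the PEMF cluster.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SemanticBoundary:
    """One field per SBD question. All names here are illustrative."""
    owned: frozenset
    not_owned: frozenset
    adjacent: frozenset = frozenset()
    allowed_frames: frozenset = frozenset()

    def classify(self, topic: str) -> str:
        # Exclusions are checked first so a not-owned topic can never
        # be treated as in scope by mistake.
        if topic in self.not_owned:
            return "not owned"
        if topic in self.owned:
            return "owned"
        if topic in self.adjacent:
            return "adjacent"
        # Anything the declaration never mentions needs a human decision.
        return "undeclared"


pemf_boundary = SemanticBoundary(
    owned=frozenset({
        "pemf mat specifications", "product architecture",
        "measurement context", "field uniformity",
        "intensity interpretation", "controller transparency",
        "buyer education", "comparison limitations",
    }),
    not_owned=frozenset({
        "disease-treatment claims", "medical protocols",
        "clinical dosing recommendations", "universal outcome promises",
        "higher intensity means better results logic",
        "condition-specific pemf setting advice",
    }),
)
```

With this structure, `pemf_boundary.classify("field uniformity")` returns `"owned"` and `pemf_boundary.classify("clinical dosing recommendations")` returns `"not owned"`. A topic the declaration never mentions comes back as `"undeclared"`, which is the case where scope creep usually starts.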

5. Query Semantics: the cluster matched the query fan-out

Query Semantics connects the content to the way users and AI systems frame the question.

Query Semantics starts from meaning, not keyword repetition. It looks at the entity, intent, modifiers, context, and implied follow-up questions behind the search, then aligns the content with that full query frame.

A query is not only a keyword string. It is interpreted through entities, intent, modifiers, and context before matching passages are selected. Fan-out is the hidden expansion layer where one visible query can branch into related sub-questions and adjacent intents.

The query Field Uniformity in PEMF Mats is not just one question. It implies several smaller questions:

| Implied sub-question | Cluster page that can support it |
| --- | --- |
| What is field uniformity? | Field Uniformity Node |
| Why does even coverage matter? | Field Uniformity Node |
| What causes uneven coverage? | Coils Node |
| How is this different from intensity? | Intensity Node |
| How should a buyer evaluate this? | How to Choose PEMF Mats Seed |

That is why the AI Overview result was important. It appeared to mirror the query fan-out. The generated answer did not only use the direct field-uniformity page. It also surfaced adjacent pages that resolved related sub-questions.

That is Query Semantics working at the cluster level.
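The fan-out table can also be read as a coverage check: given a set of surfaced pages, which implied sub-questions are supported? The sketch below uses the sub-questions and page labels from the table. The `coverage` helper is a hypothetical name, and the mapping is the article's editorial mapping, not something a search system exposes.

```python
# Sub-question -> cluster page that can support it (from the table above).
FAN_OUT = {
    "What is field uniformity?": "Field Uniformity Node",
    "Why does even coverage matter?": "Field Uniformity Node",
    "What causes uneven coverage?": "Coils Node",
    "How is this different from intensity?": "Intensity Node",
    "How should a buyer evaluate this?": "How to Choose PEMF Mats Seed",
}


def coverage(fan_out: dict[str, str], surfaced: set[str]) -> tuple[list[str], list[str]]:
    """Split sub-questions into those covered by surfaced pages and those not."""
    covered = [q for q, page in fan_out.items() if page in surfaced]
    missing = [q for q, page in fan_out.items() if page not in surfaced]
    return covered, missing


# A single-page citation versus the whole cluster.
direct_only = coverage(FAN_OUT, {"Field Uniformity Node"})
whole_cluster = coverage(FAN_OUT, set(FAN_OUT.values()))
```

With only the direct Node surfaced, two of the five sub-questions are covered. With the Seed and adjacent Nodes surfaced as well, none are missing, which is the difference between a single-page citation and cluster-level support.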

A secondary effect of this structure is adjacent-query capture. When a Seed and its Nodes define the entity, attributes, measurements, comparisons, and buyer-evaluation frames clearly enough, the cluster can begin ranking for queries that were not directly targeted. This happens because the SCN does not only answer one keyword; it covers the semantic neighborhood around the topic. In this project, that meant some pages gained visibility for related PEMF mat specification and buyer-education queries beyond their exact target terms. That outcome is useful, but it should not be treated as automatic. It depends on the strength of the network, the authority baseline, the query space, and how well each Node preserves its role.

Case Study: A Technical Content Cluster Reaches Tier 5

The case study example comes from a PEMF mat education cluster built for HealthyLine, a wellness-device manufacturer.

The brand is not the point of the case study. The retrieval behavior is.

In one observed Google AI Overview result for the query Field Uniformity in PEMF Mats, the AI Overview surfaced multiple pages from the same domain. The visible source set included the direct field-uniformity page, the broader choosing guide, and adjacent technical explainers.

Screenshot caption. Query: Field Uniformity in PEMF Mats. Observed result: Google’s AI Overview surfaced the direct Node, the broader Seed, and adjacent technical Nodes from the same topical cluster.

Observed classification:

Tier 5: Cluster Surfacing

This capture matters because the AI Overview did not behave like a simple single-page citation. It surfaced several pages that corresponded to different roles inside the SCN.

| Surfaced page type | SCN role | Retrieval function |
| --- | --- | --- |
| Field Uniformity article | Node | Direct answer to the query |
| How to Choose PEMF Mats | Seed | Broader buying framework |
| PEMF Mat Intensity article | Node | Adjacent technical clarification |
| Coil or measurement-related content | Node | Supporting mechanism context |

This is what Tier 5 looks like in practice. The AI result surfaced not only content depth, but content architecture.

Evidence Table

The following observations classify visible AI retrieval outcomes using the AI Retrieval Outcome Tiers framework.

| Query | Observed tier | Pages surfaced | What it shows |
| --- | --- | --- | --- |
| Are all PEMF mats the same? | Tier 1: Citation Presence | HealthyLine’s “PEMF Mat Sizes Explained: How to Choose the Right Format” appears in the expanded source panel. | HealthyLine earned retrieval presence as a supporting citation, but it was not a visible primary source in the main AI Overview surface. |
| PEMF Coils in Mats Explained | Tier 2: Multi-Page Citation | HealthyLine’s “How to Choose PEMF Mats” and “What PEMF Mat Specs Matter Most for Comparison?” appear in the expanded source panel. | Multiple HealthyLine pages were retrieved as supporting sources, showing domain-level retrieval depth without visible anchor dominance. |
| How to Choose PEMF Mats | Tier 3: Anchor Citation | HealthyLine’s “PEMF Mat Sizes Explained: How to Choose the Right Format” appears as a visible source card / answer source. | HealthyLine earned prominent citation through one visible answer source connected to the generated answer. |
| PEMF specs transparency | Tier 4: Multi-Anchor Retrieval | HealthyLine’s “PEMF Spec Transparency Checklist,” “How to Choose PEMF Mats,” and “PEMF Frequency Explained” appear as visible source cards, with additional HealthyLine citation markers in the answer text. | Multiple HealthyLine pages appeared as visible anchors, showing prominent same-domain retrieval depth across specification-related pages. |
| Field Uniformity in PEMF Mats | Tier 5: Cluster Surfacing | HealthyLine’s Field Uniformity Node, How to Choose PEMF Mats Seed, PEMF Mat Intensity Node, and related specification/coil-layout pages appear together as a source set. | The AI Overview surfaced multiple pages from the same declared topical cluster in different roles: direct Node, broader Seed, and adjacent supporting Nodes. |

AI Overview composition can vary by location, device, account state, query wording, and time. These screenshots document observed retrieval outcomes. They do not prove permanent ownership of any AI Overview.
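Because composition varies, it can help to store each observation together with its capture context rather than as a bare tier label. The record below is one possible shape, not a prescribed format, and all field names are hypothetical. The queries and tiers come from the evidence table. The context fields are left as `None` because the table does not record them.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Observation:
    query: str
    tier: int
    # Capture context. None means "not recorded for this capture".
    location: Optional[str] = None
    device: Optional[str] = None
    account_state: Optional[str] = None
    captured_on: Optional[str] = None


observations = [
    Observation("Are all PEMF mats the same?", 1),
    Observation("PEMF Coils in Mats Explained", 2),
    Observation("How to Choose PEMF Mats", 3),
    Observation("PEMF specs transparency", 4),
    Observation("Field Uniformity in PEMF Mats", 5),
]


def highest_tier(log: list[Observation]) -> Observation:
    """Return the observation with the highest retrieval tier."""
    return max(log, key=lambda o: o.tier)


def by_tier(log: list[Observation]) -> dict[int, list[str]]:
    """Group observed queries by tier for reporting."""
    grouped: dict[int, list[str]] = {}
    for o in log:
        grouped.setdefault(o.tier, []).append(o.query)
    return grouped
```

Re-running the same query later and appending a new record, instead of overwriting the old one, keeps the log honest about how stable each retrieval state actually is.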

Why the Cluster Was Retrieval-Friendly

The cluster was retrieval-friendly because multiple SRO layers worked together.

No single factor explains the result. The cluster had a clear architecture, extractable passages, acceptable technical eligibility, source governance, and strong query-frame alignment.

The pattern looked like this:

| SRO layer | What it contributed |
| --- | --- |
| Macrosemantics | A Seed-and-Node structure that gave the topic a clear map. |
| Microsemantics | Definition-first passages, tables, and contrast blocks that could be extracted. |
| Technical Eligibility | Pages that were accessible and stable enough to remain in the candidate set. |
| Trust Calibration | Source context and claim boundaries that reduced unsafe interpretation. |
| Query Semantics | Coverage of the fan-out questions implied by the target query. |

The result was a source environment where an AI system could retrieve a direct answer, a broader decision framework, and adjacent technical explanations from the same domain without major terminology conflict.

That is the practical purpose of SRO.

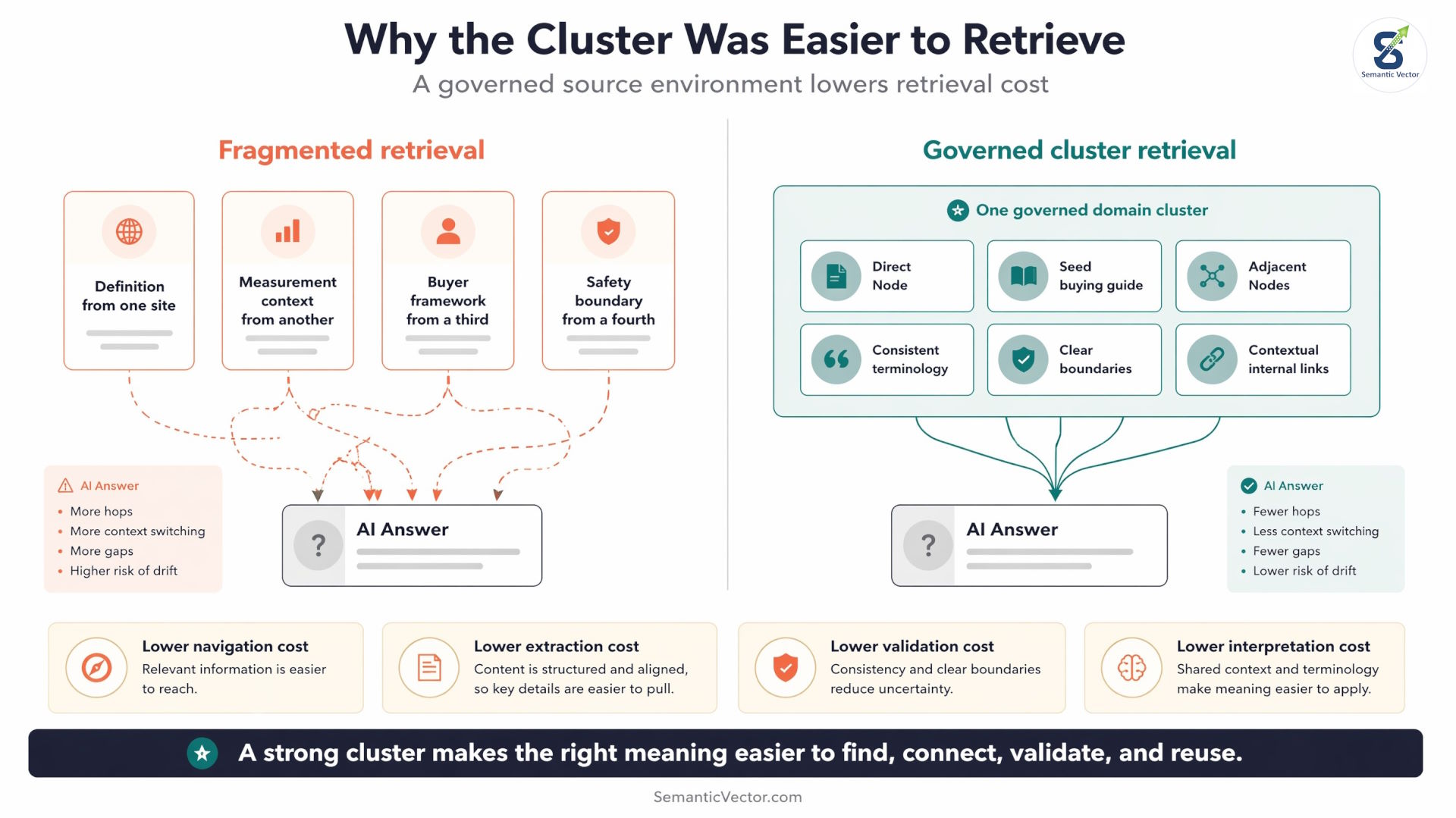

Retrieval Cost: Why the Cluster Was Easier to Use

Retrieval cost is the amount of work an AI system must do to find, compare, validate, and assemble information into a usable answer.

In traditional SEO, we often think in terms of ranking signals. In SRO, we also think in terms of retrieval effort. If an AI system has to pull a definition from one site, a measurement explanation from another, a buyer framework from a third, and a safety boundary from a fourth, the answer becomes more expensive to assemble.

The system has to reconcile terminology, source quality, claim boundaries, and possible contradictions across multiple domains.

A well-built SCN reduces that cost.

In this case, the PEMF content cluster gave the AI system several retrieval advantages:

| Retrieval problem | How the cluster reduced it |
| --- | --- |
| The query had multiple implied sub-questions | The cluster had focused Nodes for field uniformity, intensity, coils, measurement distance, Gauss, and buyer evaluation. |
| The AI system needed a broader decision frame | The Seed page supplied the buying framework. |
| Technical terms could be interpreted inconsistently | Definitions were kept consistent across the cluster. |
| Health-adjacent claims could create risk | SBD kept the content inside specification interpretation and buyer education. |
| Related concepts needed to be connected | Contextual internal links showed which pages supported which ideas. |
| A single page could become overloaded | SCN and Visual Semantics distributed fan-out coverage across focused pages. |

The key point is not that AI systems are “lazy.” The key point is that retrieval systems need coherent, low-conflict source material. When one domain provides a clean answer path across multiple related pages, it can reduce the cost of assembling the answer.
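The four-site scenario described earlier in this section can be turned into a toy calculation. The proxy below is an assumption made for illustration: it counts the distinct domains an answer draws on and the number of domain pairs whose terminology and claims would need reconciling. It is not a metric defined by SRO, and it does not describe how any search system computes cost. The `.example` domains are placeholders.

```python
def distinct_domains(answer_parts: dict[str, str]) -> int:
    """Number of different domains needed to cover every answer part."""
    return len(set(answer_parts.values()))


def reconciliation_pairs(answer_parts: dict[str, str]) -> int:
    """Domain pairs that may disagree on terms or claims: n * (n - 1) / 2."""
    n = distinct_domains(answer_parts)
    return n * (n - 1) // 2


# Fragmented case: each answer part comes from a different site.
fragmented = {
    "definition": "site-a.example",
    "measurement explanation": "site-b.example",
    "buyer framework": "site-c.example",
    "safety boundary": "site-d.example",
}

# Clustered case: one governed domain supplies every part.
clustered = {part: "cluster.example" for part in fragmented}
```

Under this proxy, the fragmented answer draws on four domains and six cross-domain pairs, while the clustered answer draws on one domain and none. The numbers are not meaningful on their own. They only make the low-conflict argument concrete.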

Each SRO layer reduces a different kind of retrieval cost:

| SRO layer | Retrieval cost it reduces |
| --- | --- |
| Macrosemantics | Navigation cost: where does this page fit in the topic map? |
| Microsemantics | Extraction cost: which passage answers the question? |
| Technical Eligibility | Access cost: can the page be loaded, rendered, and parsed? |
| Trust Calibration | Validation cost: is the source safe and consistent enough to use? |
| Query Semantics | Interpretation cost: does the content match the actual query frame? |
| Visual Semantics | Presentation cost: is the right meaning visible at the right depth? |

Cluster Surfacing matters because it suggests the system did not need to stitch together a fragmented answer from many unrelated sources. It found a source environment where the direct answer, supporting concepts, and broader decision framework were already organized.

In SRO terms:

A strong content cluster does not only add more pages. It lowers retrieval cost by making the right meaning easier to find, connect, validate, and reuse.

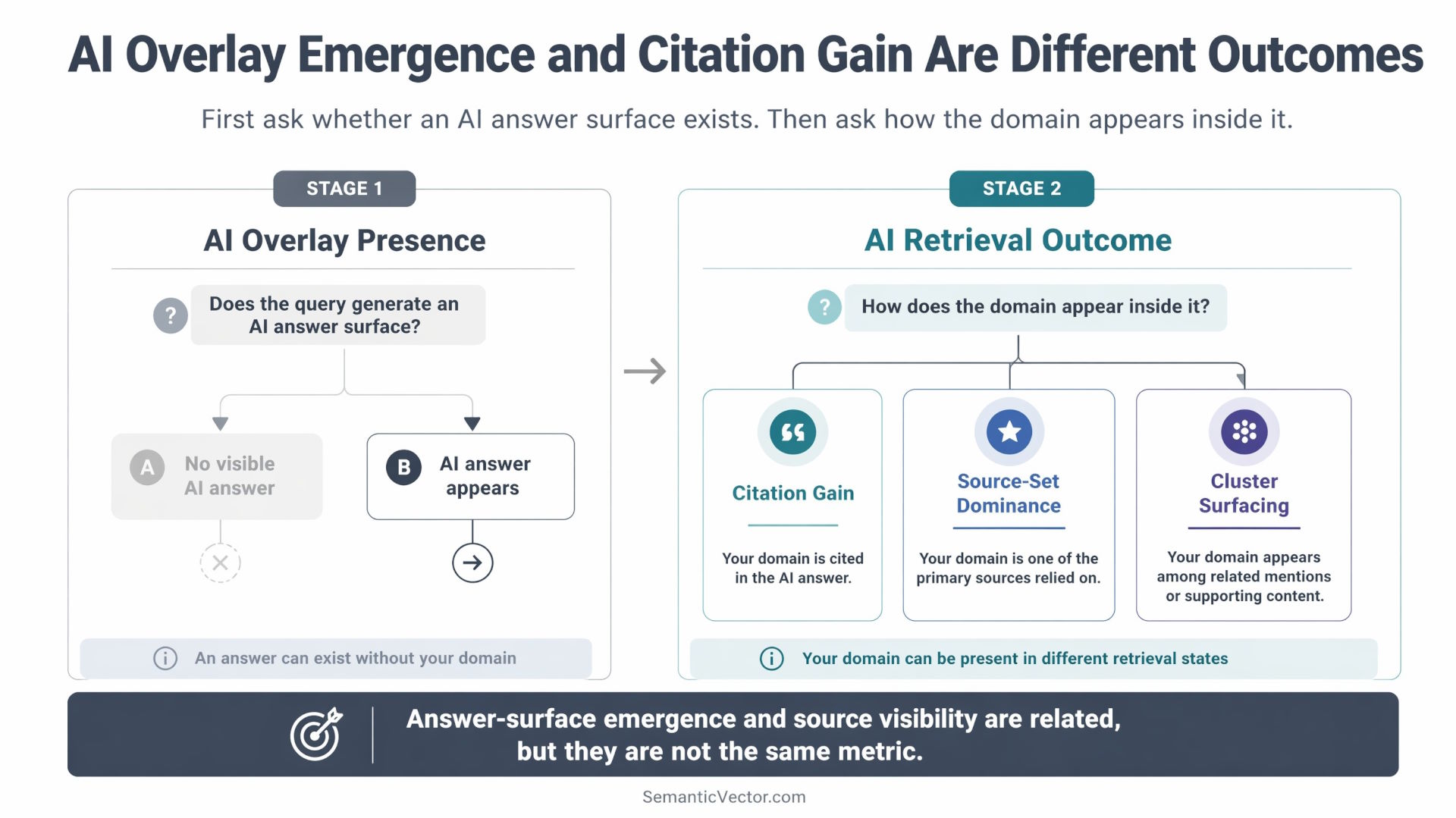

AI Overlay Emergence: when the query gains an answer surface

AI Overlay Emergence occurs when a query that previously did not show an AI-generated answer begins showing one after enough retrievable, coherent, and trustworthy content enters the index.

This is different from winning a citation inside an existing AI Overview. In an existing AI Overview, the system already has enough material to generate an answer, and the competitive question is which sources it cites. In an emergence case, the more interesting question is whether the source environment helped make the answer possible in the first place.

In this case study, some technical PEMF mat queries appeared to move from no visible AI Overview to an AI Overview that cited the new content cluster. That does not prove direct causation. AI Overview behavior changes by location, account state, query wording, indexing state, and Google testing systems.

But the observation is important because it suggests that SRO can do more than compete for existing AI citations. In under-covered query spaces, a governed content cluster may help reduce the uncertainty that prevents an AI answer surface from appearing.

A simple way to separate the outcomes:

| Outcome | What changes | What it means |
| --- | --- | --- |
| AI Overlay Citation Gain | An existing AI answer starts citing your page or domain. | Your source becomes part of an existing answer surface. |
| AI Overlay Source-Set Dominance | The AI answer cites or surfaces multiple pages from your domain. | Your domain becomes a dominant visible source environment. |
| AI Overlay Emergence | A query that did not show an AI answer begins showing one after coherent content enters the index. | The query may have gained enough retrievable support for an answer surface to appear. |
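The three outcomes can be told apart from a before-and-after pair of observations for the same query. The sketch below is one way to encode the table. The `Snapshot` type and function name are hypothetical, "multiple pages" is assumed to mean two or more, and the labels describe the observable change only. They say nothing about what caused it.

```python
from dataclasses import dataclass


@dataclass
class Snapshot:
    has_ai_answer: bool          # did the query show an AI answer surface?
    domain_pages_cited: int = 0  # pages from the tracked domain in that answer


def overlay_outcomes(before: Snapshot, after: Snapshot) -> list[str]:
    """Label the observable change between two captures of one query."""
    outcomes = []
    if not before.has_ai_answer and after.has_ai_answer:
        outcomes.append("AI Overlay Emergence")
    if (before.has_ai_answer and after.has_ai_answer
            and before.domain_pages_cited == 0
            and after.domain_pages_cited >= 1):
        outcomes.append("AI Overlay Citation Gain")
    # Assumption: "multiple pages" means at least two.
    if after.has_ai_answer and after.domain_pages_cited >= 2:
        outcomes.append("AI Overlay Source-Set Dominance")
    return outcomes


# No AI answer before, then an answer citing three pages from the domain.
emerged = overlay_outcomes(Snapshot(False), Snapshot(True, 3))

# An AI answer already existed, and the domain is cited once afterwards.
gained = overlay_outcomes(Snapshot(True, 0), Snapshot(True, 1))
```

The labels are not mutually exclusive. In the first example the query gains an answer surface and the domain supplies several of its sources, so both Emergence and Source-Set Dominance apply.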

AI Retrieval Outcome Tiers and AI Overlay Emergence measure different things.

| Measurement layer | Question it answers |
| --- | --- |
| AI Overlay Presence | Does the query generate an AI answer surface? |
| AI Retrieval Outcome Tiers | If an AI answer exists, how does the domain appear inside it? |
| Retrieval Cost | How hard is it for the system to assemble a coherent answer? |
| SRO Layers | Which parts of the content system reduce that cost? |

This distinction matters. AI Retrieval Outcome Tiers classifies source visibility inside the generated answer. AI Overlay Emergence describes whether the generated answer surface appears for the query at all.

The strongest SRO outcome is not only to be cited after the AI answer exists. It is to build a source environment that makes the answer easier to generate, easier to support, and easier to cite.

What This Case Study Reveals and What It Does Not

This case study reveals the public strategy and the observable retrieval outcome.

It shows how SRO concepts can move from theory into a live search environment. It introduces AI Retrieval Outcome Tiers as a way to classify AI visibility beyond traditional ranking. It shows how SCN, SBD, Microsemantics, Technical Eligibility, Trust Calibration, and Query Semantics can work together in a real technical content cluster.

It does not reveal the full implementation stack.

The public framework includes:

- AI Retrieval Outcome Tiers

- SRO as the discipline

- SCN as the architecture layer

- SBD as the governance layer

- the five SRO layers

- the observed case study

- the high-level workflow

The proprietary layer includes:

- scoring models

- prompt chains

- page diagnostics

- internal validators

- workflow automation

- visual-semantic checks

- operational QA systems

- tool-level implementation logic

The frameworks are public because the industry needs better vocabulary for AI retrieval outcomes. The implementation layer remains proprietary because repeatable execution requires tooling, diagnostics, and validation systems beyond the scope of a case study.

Scope Boundary: This Case Study Focuses on On-Site SRO

This case study focuses on on-site Semantic Retrieval Optimization.

That means it examines the parts of SRO that happen inside the owned website: content architecture, page roles, passage clarity, technical eligibility, source boundaries, internal linking, query-frame alignment, and retrieval cost.

It does not attempt to cover the full off-page layer of AI visibility.

That distinction matters because off-page optimization is a separate and important part of how AI systems perceive brands, especially in competitive, commercial, and comparison-style queries. Third-party listicles, Reddit comments, review pages, affiliate roundups, publisher mentions, forum discussions, YouTube transcripts, and external brand co-occurrence can all influence how a brand appears in LLM answers and AI-generated recommendations.

Those tactics are real. They work. In some competitive query classes, they may be one of the strongest ways to affect brand inclusion and framing.

But they are not the subject of this case study.

Within the SRO model, off-page signals still relate to Trust Calibration because they influence external validation, brand co-occurrence, consensus signals, and perceived source reliability. However, off-page SRO is complex enough to deserve its own framework and case study.

This article intentionally focuses on the owned-content side:

| Included in this case study | Outside the scope of this case study |
| --- | --- |
| SCN architecture | Third-party listicle placement |
| Seed and Node structure | Reddit/forum influence strategies |
| Microsemantic passage clarity | Affiliate roundup engineering |
| Technical Eligibility | Review acquisition or review-site strategy |
| SBD and claim boundaries | External brand co-occurrence campaigns |
| Internal linking | Publisher outreach |
| Query Semantics | Off-site sentiment shaping |
| Retrieval cost reduction | LLM brand-perception seeding |

This boundary keeps the case study focused.

The goal is not to claim that on-site SRO is the only path to AI visibility. The goal is to show that a governed on-site content network can produce observable AI retrieval outcomes, including citation presence, multi-page citation, anchor citation, multi-anchor retrieval, and cluster surfacing.

Off-page optimization is a different layer of SRO. It matters, but it is not required to understand the on-site retrieval outcome documented here.

What This Case Study Does Not Prove

This case study does not prove that content architecture alone caused the AI Overview result.

The safest claim is narrower and more useful: after a governed content network was built and indexed, Google’s AI Overview showed observable retrieval behavior where multiple pages from the same cluster surfaced together.

This case study does not prove:

- permanent AI Overview ownership

- universal reproducibility

- the same results across every location or account

- that every domain can reach Tier 5 quickly

- that architecture alone caused the outcome

- traffic, revenue, or conversion impact

- that Google will show the same source set to every user

- that the content alone caused new AI Overviews to appear

- that every technical long-tail query will gain an AI Overview after SCN coverage

- that Visual Semantics has already been fully applied to every page in the cluster

- that AirOps / Indig ChatGPT findings transfer one-to-one to Google AI Overviews

- that SRO deterministically caused every observed AI result

- that a brand-new domain with no authority baseline can reproduce the same speed or retrieval tier

- that off-page SRO, including listicles, Reddit, reviews, and external brand co-occurrence, is unnecessary

- that retrieval tier equals business value

Retrieval tier is not the same as business value. A Tier 5 result on a low-volume educational query may be strategically useful for authority-building, while a Tier 3 Anchor Citation on a high-intent commercial query may be more valuable commercially. AI Retrieval Outcome Tiers measures retrieval state, not revenue, lead value, or conversion proximity.

It does show:

- traditional ranking is incomplete as an AI visibility metric

- AI retrieval outcomes can be classified into observable tiers

- a real domain can show multiple retrieval states across a technical content cluster

- content clusters can surface as source sets

- governed content architecture can support higher retrieval outcomes

- focused Nodes and distributed fan-out coverage align with independent retrieval research

- Visual Semantics is a useful execution governor for preventing Node over-expansion

- AI Overlay Emergence is separate from AI Overlay citation

- retrieval cost is the unifying mechanism across SRO layers

- AI Retrieval Outcome Tiers can function as a performance framework for on-site SRO

- a governed SCN can create visibility for adjacent queries beyond the exact terms directly targeted

That distinction matters. The case study is not a guarantee. It is a documented retrieval event and a framework for classifying similar events.

What AI Retrieval Outcome Tiers Change

AI Retrieval Outcome Tiers changes the reporting question from “did we get cited?” to “what retrieval state did the domain occupy?” That is the difference between output monitoring and SRO performance measurement.

The framework connects implementation to outcome

AI Retrieval Outcome Tiers becomes most useful when it is mapped back to the SRO work that produced the conditions for retrieval.

| SRO work | Visible retrieval outcome it may support |
| --- | --- |
| Microsemantic answer passages | Citation Presence or Anchor Citation |
| Consistent supporting Nodes | Multi-Page Citation |
| Strong page-role architecture | Multi-Anchor Retrieval |
| Connected Seed / Node structure | Cluster Surfacing |
| SBD and Trust Calibration | Safer source reuse in health-adjacent contexts |
| Technical Eligibility | Movement from no retrieval presence into the candidate set |
| Query Semantics | Better match between surfaced pages and fan-out intent |

The framework does not prove causation by itself. But it gives teams a vocabulary for connecting implementation work to observed retrieval outcomes.

That is why AI Retrieval Outcome Tiers belongs inside SRO. It is not only a reporting label. It is a diagnostic layer.
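Used diagnostically, the mapping can be read in reverse: start from the outcome a team wants to reach and look up the SRO work that may support it. The lookup below is a sketch of that reading. It covers only the table rows that name a tier outcome, the function name is hypothetical, and "may support" carries the same caveat as the table: it is a review prompt, not a causal claim.

```python
# SRO work -> tier outcomes it may support (tier-named rows of the table).
MAY_SUPPORT = {
    "Microsemantic answer passages": ["Citation Presence", "Anchor Citation"],
    "Consistent supporting Nodes": ["Multi-Page Citation"],
    "Strong page-role architecture": ["Multi-Anchor Retrieval"],
    "Connected Seed / Node structure": ["Cluster Surfacing"],
}


def work_to_review(target_outcome: str) -> list[str]:
    """List the SRO work items associated with a target retrieval outcome."""
    return [work for work, outcomes in MAY_SUPPORT.items()
            if target_outcome in outcomes]


anchor_review = work_to_review("Anchor Citation")
cluster_review = work_to_review("Cluster Surfacing")
```

An outcome with no entry returns an empty list. That signals the table has nothing to say about it, not that no work is needed.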

1. AI visibility is not binary

A domain is not simply cited or not cited. It can move through different retrieval states.

- A Tier 1 Citation Presence outcome means one source from the domain is present.

- A Tier 2 Multi-Page Citation outcome means the domain has supporting retrieval depth.

- A Tier 3 Anchor Citation outcome means one source is visibly attached to the generated answer.

- A Tier 4 Multi-Anchor Retrieval outcome means several pages from the domain appear as visible anchors.

- A Tier 5 Cluster Surfacing outcome means the topical cluster itself is surfaced as a connected source set.

The tiers are ordered by retrieval-state progression, not guaranteed traffic value. A Tier 3 Anchor Citation on a high-intent query may be more commercially valuable than a Tier 5 Cluster Surfacing outcome on a low-volume query.

2. Pages are no longer the only unit of optimization

Pages still matter. But in AI search, the cluster can become the stronger unit of retrieval.

A single page can answer a direct question. A cluster can answer the question plus the fan-out behind it.

That is why SCN architecture matters. The AI system may need the definition, the mechanism, the comparison context, and the buyer framework. If those live in connected pages with consistent terminology, the domain becomes easier to reuse as a source set.

3. Governance becomes part of retrieval strategy

In complex categories, unsafe or unclear claims can weaken retrieval confidence.

This is why SBD matters. A Semantic Boundary Declaration defines what the source owns, what it does not own, what is adjacent, and which query frames are allowed. In health-adjacent categories, that prevents the content from chasing risky demand at the expense of trust.

The goal is not just to rank a page. The goal is to build a source environment that AI systems can retrieve, trust, and assemble.

Verify the Results Yourself

You can check the observed behavior directly, but results may vary.

Search results and AI Overviews can change by location, device, account state, query wording, and Google testing behavior. When checking, do not only look at the blue-link ranking. Look at the AI Overview source set.

Suggested queries to test:

- Field Uniformity in PEMF Mats

- Are all PEMF mats the same?

- PEMF Coils in Mats Explained

- How to Choose PEMF Mats

- PEMF Spec Transparency

- PEMF Frequency Explained

When you check, classify what you see:

| Question to ask | Possible tier |
| --- | --- |
| Is the domain absent? | Baseline |
| Is one page cited somewhere? | Tier 1 |
| Are multiple pages cited in the source layer? | Tier 2 |
| Is one page a visible answer source? | Tier 3 |
| Are multiple pages visible as answer anchors? | Tier 4 |
| Does the AI Overview surface the cluster as a source set? | Tier 5 |

The important observation is not only whether a page ranks. The important observation is whether Google retrieves one page, multiple pages, or the cluster.
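The classification questions above can be written as a short decision function. This is a sketch under two assumptions: the highest matching tier wins, and whether the cluster surfaced as a connected source set is a judgment the observer makes and passes in. The function and parameter names are hypothetical.

```python
def classify_tier(cited_pages: int, anchor_pages: int, cluster_surfaced: bool) -> str:
    """Map one observed AI Overview to a retrieval tier.

    cited_pages      -- pages from the domain anywhere in the source layer
    anchor_pages     -- pages from the domain shown as visible answer sources
    cluster_surfaced -- observer's judgment that Seed and Node roles
                        appeared together as a connected source set
    """
    # Checks run from the highest tier down so the strongest state wins.
    if cluster_surfaced:
        return "Tier 5: Cluster Surfacing"
    if anchor_pages >= 2:
        return "Tier 4: Multi-Anchor Retrieval"
    if anchor_pages == 1:
        return "Tier 3: Anchor Citation"
    if cited_pages >= 2:
        return "Tier 2: Multi-Page Citation"
    if cited_pages == 1:
        return "Tier 1: Citation Presence"
    return "Baseline"
```

For example, `classify_tier(cited_pages=2, anchor_pages=0, cluster_surfaced=False)` returns `"Tier 2: Multi-Page Citation"`. The counts are easy to record from a screenshot. The Tier 5 judgment is the part that depends on knowing the cluster's declared page roles.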

Origin of the AI Retrieval Outcome Tiers Framework

AI Retrieval Outcome Tiers was introduced by Sergey Lucktinov as part of the Semantic Retrieval Optimization framework.

The framework was created to classify AI search visibility outcomes that traditional rank tracking does not capture. It gives practitioners a vocabulary for distinguishing citation presence, multi-page citation, anchor citation, multi-anchor retrieval, and cluster surfacing.

The conceptual foundation of this work comes from Koray Tuğberk Gübür’s Semantic SEO framework, especially the idea that topical authority is built through coherent semantic networks rather than isolated keyword pages. AI Retrieval Outcome Tiers extends that foundation by classifying the visible retrieval outcomes that appear when such networks are selected inside AI-generated answers.

Framework name: AI Retrieval Outcome Tiers

Author: Sergey Lucktinov

Publisher: SemanticVector.com

First published: May 1st, 2026

Primary use case: Classifying domain visibility inside AI-generated search answers

Suggested citation:

Lucktinov, Sergey. “AI Retrieval Outcome Tiers: From Citation Presence to Cluster Surfacing.” SemanticVector.com, [publication date].

Further Reading

This case study is part of the broader Semantic Retrieval Optimization framework.

To study the conceptual foundation, read:

The public frameworks explain the language of SRO. The tooling layer makes the process repeatable.

SRO is not a shortcut for AI visibility. It is a system for making meaning clear, structured, trustworthy, technically eligible, and aligned with the way AI systems assemble answers.

Sergey Lucktinov is the creator of the SCN Constitution and Semantic Retrieval Optimization (SRO) – the first framework to unify Semantic SEO, GEO, AEO, AIO, and LLM-based retrieval principles into a single governance and retrieval optimization model. He holds patents in AI infrastructure and retrieval optimization. He is the author of Semantic SEO, SRO & AI: Get Found, Trusted, and Chosen in the AI Era. His work builds on the Semantic SEO methodology pioneered by Koray Tuğberk Gübür.